The Terafab Moment: Seven Lenses from Factory Floor to Civilizational Infrastructure

On the Semiconductor Renaissance and the Coming Governance Capability Overhaul

Preamble

This past week Elon Musk announced his Terafab project in Austin, Texas, a joint Tesla-SpaceX-xAI semiconductor fab at Giga Texas, meant to enable end-to-end chip production from mask-making to testing in one facility for rapid design iteration. Musk frames this not as an incremental R&D initiative but as a brute-force solution to the terrestrial supply chain ceiling: even combining the output of TSMC, Samsung, and Micron, the world produces barely 2% of the compute required for his envisioned terawatt-scale AI infrastructure. Terafab represents both a technical and strategic pivot, a proprietary pipeline built to bypass the limits of conventional chip fabrication and scale compute, apparently “the most epic chip-building exercise in history by far”.

Musk contextualizes Terafab within a broader framework of space-optimized energy and compute infrastructure, where off-world deployment overcomes terrestrial constraints like regulatory friction, limited real estate and NIMBY opposition, and utility costs. The project targets one terawatt per year of compute power, but Tesla’s limited fab experience raises execution risks, akin to the delays seen in other Musk-led ventures.

But whether or not Terafab is ever delivered, it is evident that the United States is positioning itself to lead tomorrow’s economic stacks. The nation is betting on brute-force efficacy to literally compute its way into an automated, post-scarcity[ish] future. With numerous concurrent initiatives underway (TSMC and Intel in Arizona, Samsung and TI in Texas, and other expansions in New York and Ohio), America’s semiconductor renaissance is unmistakably in full swing.

AI-seasoned policymakers, analysts, and scholars can surely paste Musk's video into an LLM like Grok or Gemini and ask for a PMESII-PT breakdown for a serviceable geopolitical overview. This essay does something different. It uses the Terafab announcement as an entry point into a deeper composite argument: that the semiconductor renaissance is not merely reshaping supply chains or great-power competition, but imposing a fundamental refactoring of statecraft itself. The seven lenses that follow move deliberately from business-level disruption to civilizational consequence, arriving at a framework for what I call here the coming governance capability overhaul. They are best read as a sequence, not a menu.

Lens I - Corporate Path-Dependency & GVC Theory: The Violent Unwinding of the Fabless Gospel

For decades, the semiconductor industry worshiped at the altar of the “fabless” model. Companies like Apple, NVIDIA, and AMD designed the chips, while TSMC, Samsung, et al. handled the messy, capital-intensive reality of actually making them. Through the lens of Gereffi’s Global Value Chain (GVC) theory, this created a highly optimized but deeply brittle “producer-driven” network where the structural lock-in was absolute: the West largely outsourced the atoms to sustain the high margins from bits.

And Musk’s Terafab in Austin is a violent rejection of this historical institutionalism.

He explicitly noted that his current suppliers have a “maximum rate at which they’re comfortable expanding,” a polite way of saying their terrestrial, path-dependent business models can’t scale to his compute needs. By integrating mask-making, logic, memory, packaging, and testing under a single roof to enable a “recursive loop” of rapid design iteration, Musk is internalizing the entire GVC. He isn’t waiting for geopolitical shocks to slowly reshore supply chains. He is bypassing the lock-in entirely by building a “Musk-driven” chain, knowing that waiting for legacy foundries to scale to civilizational demands is a fool’s errand. Musk identified the bottleneck, built the solution, and secured a gatekeeping position. Checkmate.

Lens II - Geoeconomic Statecraft & Industrial Policy: A Privatized Mazzucato Moonshot

Current US industrial policy (e.g., the CHIPS Act/CSA) aims at “decoupling” from Asian supply chains to rebuild domestic resilience. But viewed through Mariana Mazzucato’s mission-oriented policy framework, Musk is executing a privatized, hyper-accelerated version of statecraft. When Musk thanked Texas Governor Greg Abbott at the Terafab presentation, this highlighted a symbiotic manipulation of geoeconomics: the state provides the incentives and regulatory cover for “reshoring,” but Musk’s endgame isn’t about bringing back 1950s blue-collar manufacturing jobs.

Instead, this is deliberate onboarding into “dark factory” automation. Musk’s projection of 10 billion Optimus humanoids by 2040 (powered by the edge-inference chips built at the Terafab?) signals that his decoupling strategy is largely reliant on robotic labor. He is weaponizing the US government’s desire for sovereign supply chains to subsidize the creation of a closed-loop, automated manufacturing ecosystem. Statecraft is merely serving as the venture capital for corporate craft, an attempt at sovereign, vertically integrated compute independence. De facto, SpaceX/xAI ceases to be a state proxy and becomes a peer to the nation-state.

Lens III - Schumpeterian Re-capitalization: The Revenge of Atoms Over Bits

For the past two decades, venture capital was obsessed with software. But if AI automates 90%+ of software maintenance and generation, the bottleneck becomes compute and power (not talent, not manpower), demanding an offramp from a hard thermodynamic ceiling that terrestrial grids, already straining under current datacenter loads, simply cannot break without decades of nuclear deployment.

Musk’s announcement signals a brutal Schumpeterian gale of creative destruction, reallocating capital from code to physical atoms. The SaaS era was a cute warm-up, but the new Kondratiev wave rides on heavy metal, silicon, and rocket fuel. Building a terawatt of compute requires launching millions of tons of payload to orbit per year, a profound paradigm shift that recasts AI as a problem of industrial logistics, thermodynamics, and materials science. Musk is betting that the companies leading the AI race won’t be just those with the best code, but those with the most ruthless mastery of physical bottlenecks and energy capture.

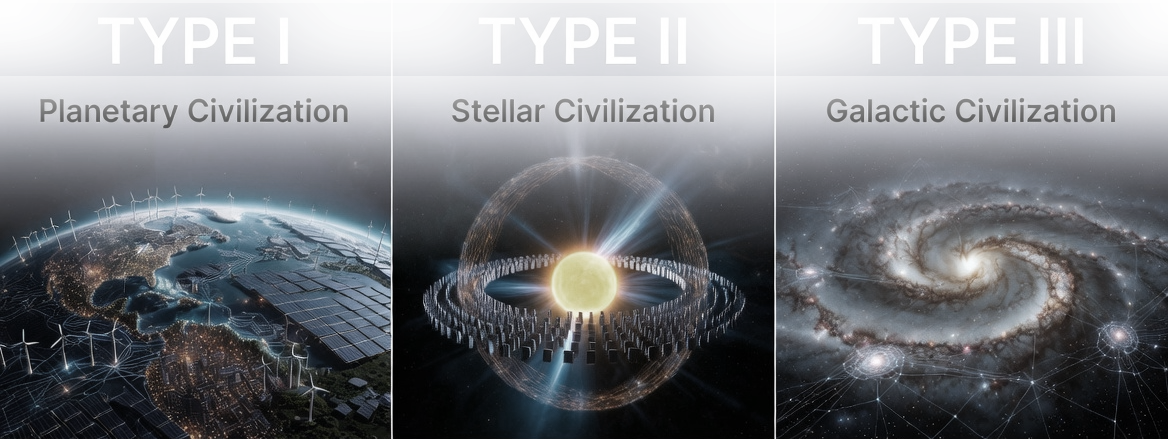

Lens IV - Securitization & Techno-Nationalist Dynamics: Hyperstition and the Kardashev Threat

In the framework of the Copenhagen School (Buzan and Wæver), a security threat is constructed through “speech acts.” While Washington securitizes semiconductors by pointing to the threat of a Chinese invasion of Taiwan, Musk elevates the threat narrative to a cosmic scale. He securitizes human survival and the limits of the terrestrial energy grid, constantly referencing the Kardashev scale and pointing out that Earth captures only a “billionth” of the Sun’s energy.

And right now, this registers as pure hyperstition—the act of bringing a fiction into reality by making people believe in it. By explicitly citing sci-fi authors like Iain M. Banks and Robert Heinlein, Musk is hacking techno-nationalist panic. He sidesteps a mundane “China threat” and replaces it with the existential dread of remaining a “tiny dust mote in a vast darkness.” By narrating the absolute necessity of off-world compute to save mankind, he wills the capital, engineering talent, and political support into existence. The narrative of scarcity on Earth becomes the self-fulfilling driver for his planned expansion into space.

The speech act operates on three distinct registers simultaneously: it mobilizes capital markets by reframing compute scarcity as existential rather than cyclical; it recruits engineering talent by elevating the work from product development to civilizational mission; and it pre-empts congressional oversight by making terrestrial regulatory friction appear not merely inefficient but cosmically irresponsible. The genius of the Kardashev frame is that it makes every institutional interlocutor feel like the small actor in the room.

Lens V - Musk’s Space Monopoly: Zoning the Off-World Economy

If we look closely at Musk’s roadmap—specifically his claim that space-based AI compute will be cheaper than terrestrial AI in just 2 to 3 years due to free solar energy and vacuum cooling—we see the ultimate vertical monopoly taking shape.

While other billionaires are treating space as a tourism destination or a glorified telecom relay, Musk is zoning low Earth orbit and the Moon for commercial real estate and heavy industry. If the future of AI requires space-based datacenters, the Musk conglomerate is well positioned to lead, with the time-to-market (TTM) resources to pull it off. He owns the logistics (SpaceX Starship), the labor force (Tesla Optimus), the AI models (xAI), and now the compute chain (Terafab). Give this man access to ISRU closed biosphere systems and our grandchildren may refer to X Æ A-Xii as great leader*, heir to the first privately driven, off-world industrial ecosystems.

By planning an electromagnetic mass driver on the Moon to launch terawatt-scale compute into deep space, he is effectively building the foundational utilities of an off-world economy. If this bet pays off, any nation or corporation venturing into space will encounter a pre-existing layer of compute, logistics, and energy, one that extracts rent by design. Musk is positioning himself to intermediate, and ultimately price, the next stage of human expansion.

More critically, just as Tesla already operates one of the world’s largest real-world video and teleoperation data engines through its fleet and Optimus training rigs, this off-world ecosystem extends the advantage into orbit. And it would not simply rely on simulation, but accumulate first-order operational data from real space environments, establishing early dominance over spaceborne data collection, feedback loops, and control systems—conferring on xAI a data provenance no other AI lab can replicate or purchase.

* If we consider: A) early Mars settlements as private but strategically critical proxies of Earth-based states (in this case, a Musk-led, largely American-backed settlement) and B) X Æ A-Xii Musk inheriting command over a privately controlled, state-backed off-world ecosystem, an industrial dynasty is plausible in a very realpolitik way. Mars becomes a high-value node in a global system: attacks or challenges to control there cascade into Earth conflicts, making off-world power effectively geopolitically enforceable through proxies. Musk’s labor robots, ISRU, and infrastructure would further reduce the friction of sustaining this settlement independently, meaning the heir’s authority is materially backed, not just nominal.

Lens VI - What Vertical Integration Means for Everyone Else

If the preceding monopoly logic holds, with one actor controlling a major share of the logistics, labor, compute design, and orbital infrastructure, then the strategic landscape for all other players is not merely constrained but structurally predetermined. The question is not whether middle powers can compete at AI’s foundational layer, but what rational adaptation looks like from here.

The answer is unambiguous: as the cost of intelligence collapses under US and Chinese hyper-scale investment, plugging into these cognitive grids via APIs is the economically rational move, given that hardware sovereignty is dead on arrival for pretty much any state outside the G2. Attempts to build a sovereign TSMC competitor or AWS-equivalent from scratch are no longer expressions of strategic autonomy but misallocations of scarce political capital that accelerate dependency by delaying adaptation.

The strategic pivot that follows is therefore not toward foundational infrastructure but toward higher layers of the stack where leverage, regulatory authority, and economic value remain attainable. Europe’s instinct here is structurally correct even when its execution is not.

The longer-term wildcard is whether AI-enabled automation eventually lowers barriers to entry sufficiently to allow late movers to leapfrog, perhaps plausible by 2035–2040 but highly contingent on how IP regimes, regulatory architectures, and the rule of law hold under the present conditions of intensifying power politics. What is not contingent is the cost of waiting: states that are not “AI-ready” by 2027 will not merely lag; they will have their dependency relationships calcified before the next window opens. Critically, in our near future, hegemons will not be merely powerful states; most will be AI-powered states that also influence the very AI driving their supremacy: in effect, transgenerational behemoths levying a new cognitive tax on everyone else.

Lens VII - After AI Building AI, Machines Will Build Their Factories

Today we do not yet possess the technology to build a fab with robots. Constructing a semiconductor facility remains one of the most complex engineering undertakings in existence, requiring thousands of highly skilled tradespeople, from pipefitters laying miles of water and hazardous gas lines, to electricians wiring massive power draws, to cleanroom specialists ensuring vibration isolation and optimal environmental control.

But if Musk’s trajectory toward increasingly capable android labor holds, why not wait until its technology readiness level stabilizes and then use these androids to construct new facilities? Over-investing in human-built fabs today risks locking capital into infrastructure that may be rendered suboptimal or even obsolete by the next wave of automation. Do we require today’s chips to train tomorrow’s androids, or are we merely observing a knee-jerk reflex of the AI race imposing artificial (and securitized) urgency? The sole certainty under these dynamics is that delay risks forfeiting the compute race, stagnating model progress, and, in turn, delaying the very automation that might have justified waiting.

It’s a difficult conundrum: the velocity and depth of automation remain uncertain, yet strategic patience is itself a liability in an adversarial landscape. No serious actor can afford to wait for a cleaner technological equilibrium if competitors continue to advance. At the same time, sufficiently capable AI systems may not merely compete within existing paradigms but redefine them—allowing late movers, under the right—perhaps vastly automated—conditions, to leapfrog established leaders.

The Imposed Refactoring of Ordinary Statecraft

Since 1500, the art of statecraft has evolved from the personal, dynastic, and territorial preoccupations of Renaissance princes to a complex interplay of ideology, institutional design, and systemic affordances. In the 16th and 17th centuries, Machiavelli’s prescriptions codified realpolitik in the service of sovereign consolidation under the emerging Westphalian order, while Hobbes and Grotius later provided the conceptual scaffolding for centralized state power as a bulwark against disorder, formalizing the state’s monopoly on violence and the juridical architecture of interstate relations. The Enlightenment of the 18th century—through Locke’s social contract and Montesquieu’s separation of powers—redirected statecraft inward, embedding legitimacy in institutional balance, legal order, and representative accountability.

By the 19th century, industrialization, nationalism, and imperial networks expanded the toolkit of statesmen: Bismarck’s realpolitik, liberal constitutionalism across Europe, and the United States’ experiment with federalism illustrated how technological systems (railways, telegraphy, industrial production) were already beginning to delimit strategic autonomy. Throughout these centuries, the sovereign state largely emerged as the absolute master of its domain, affording medium and small powers the luxury of self-management, relative isolationism, and the diplomatic ability to cleanly opt out of great-power friction while focusing on domestic affairs.

But what distinguishes the 20th and 21st centuries is not the emergence of constraint, but its qualitative transformation.

Power now flows through supply chains, financial clearing systems, cyber/digital infrastructure, and privatized technological ecosystems, domains in which states are influential but rarely sovereign. While post-World War II multilateralism, Keynesian economic architecture, and the Bretton Woods financial system provided a temporary scaffold, the acceleration of global interdependence, digitization, and AI-driven decision-making has produced tightly coupled systems whose disruptions propagate across sectors and borders. Medium and small powers now function under the shadow of hegemonic realpolitik, where choices (such as aligning with both the United States and China) are no longer discrete policy decisions but existentially systemic, reshaping domestic governance, trade policy, and medium-term security strategy.

Modern statecraft is therefore less about sovereign survival and the management of a bounded polity and more about the navigation of interlocking networks of power, in which foresight, technological literacy, and strategic anticipation of global cascades become central competencies. It is an AI-accelerated version of what political scientists Henry Farrell and Abraham Newman describe as weaponized interdependence: a condition in which network centrality enables coercion, now intensified by the speed, scale, and adaptability of algorithmic systems.

As the major AI powers, namely the G2, actively leverage these transnational networks for geoeconomic statecraft and impose a top-down realpolitik, the concept of fully independent, domestic-facing states trembles. For historically non-aligned democracies such as Brazil, neutrality does not disappear but becomes unstable and continuously renegotiated, as even routine decisions (procurement of AI infrastructure, adoption of communication standards) carry embedded geopolitical commitments.

The stark reality for AI-lagging Western states is that statecraft is no longer confined to balancing internal institutions; it involves a high-wire act of hedging domestic sovereignty against a deeply transformational global architecture that they must friendshore but do not control.

Final Thoughts

In the era of AI statecraft, the “rent-a-brain” model will, at least temporarily, belong to a Sino-American duopoly. The remaining nation-states, by then constrained in their ability to cultivate an indigenous technological lineage, would do well to recognize that this period will become deeply inscribed in their algorithmic ancestry and digital provenance, shaping the boundaries of possibility for future generations.

This is a bad time to be corrupt, though not for the reasons one might imagine. Corruption leads to path dependency, which in effect limits horizons, and we don’t have many of those available right now. For the United States and China, wisdom demands reflection on how their historical narratives and ambitions will shape the realities of the world of 2050. For all other nations, the homework is no less demanding: constraints on resources and autonomy cannot justify a deficit of responsibility. Thus, if smaller powers are destined to operate as satellites, they must nonetheless act as counterweights, reminding dominant actors of the principles they claim to uphold.

As AI systems approach what the field terms recursive self-improvement (RSI), global compute becomes a continuously optimizing production layer: this is the era of history’s largest software factory. Yet states do not prosper on production alone. In political terms, RSI implies accelerated policy iteration, tighter institutional feedback loops, and deeper integration across historically siloed domains. It may inaugurate a new era, but likely only a transient one. In discussing institutional incrementalism, I recently organized my thoughts as follows:

A) Institutional friction has historically preserved legitimacy;

B) AI compresses decision, information and labor latency;

C) Friction thus becomes a strategic liability;

= AI introduces a governance capability overhaul.

Under such conditions, policy convergence, diffusion, and transfer cease to be gradual processes and instead become rapid, testable, and iterative.

There’s an old joke in AI circles that AI’s cognitive capabilities will eventually match those of its human counterpart, but only briefly, for at that point the human ceases to be the reference point. Perhaps the same logic transfers to statecraft: AI capabilities will eventually match those of our civilizational constructs, at macro scales. And by then, to think of AI in terms of leaders and laggards will be preposterous and, hopefully, obsolete.